Welcome to the seventh post (0-6) of a series that shows you how to write a Microsoft Teams application with Blazor WebAssembly.

You will find the complete source code in this GitHub repository. I placed my changes in different branches so you can easily jump in at any time.

In this post we will extend our library, so that we are able to create access tokens to access the Microsoft Graph.

Extending our TypeScript library

We create a new method that takes the ClientAuthToken and the scopes of the APIs we want to access. We obtain the tenant ID from the MS Teams context and send everything to our backend (/auth/token), which uses the application (client) ID and the client secret to create an access token:

    GetServerToken(clientSideToken: string, scopes: string[]): Promise<string> {
        return new Promise<string>((resolve, reject) => {
            microsoftTeams.getContext((context) => {
                fetch('/auth/token', {
                    method: 'post',
                    headers: {
                        'Content-Type': 'application/json'
                    },
                    body: JSON.stringify({
                        'tid': context.tid,
                        'token': clientSideToken,
                        'scopes': scopes
                    }),
                    mode: 'cors',
                    cache: 'default'
                })
                .then((response) => {
                    if (!response.ok) {
                        // Throwing skips the next then() and ends up in catch() below.
                        throw new Error(response.statusText);
                    }
                    return response.json();
                })
                .then((responseJson) => {
                    if (responseJson.error) {
                        // The backend answered with a TokenError object.
                        reject(responseJson.error);
                    } else {
                        // The backend answered with the access token as a JSON string.
                        resolve(responseJson);
                    }
                })
                // Network failures and non-OK responses reject the promise
                // instead of getting lost.
                .catch((err) => reject(err));
            });
        });
    }
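To make the wire format explicit, here is a minimal sketch of the request that GetServerToken sends to /auth/token. The helper buildTokenRequest and the interface TokenRequestBody are hypothetical names introduced only for illustration; the field names tid, token and scopes are the ones used in the method above.

```typescript
// Hypothetical shape of the JSON body posted to /auth/token.
interface TokenRequestBody {
    tid: string;
    token: string;
    scopes: string[];
}

// Hypothetical helper: builds the fetch options without sending anything.
function buildTokenRequest(tid: string, clientSideToken: string, scopes: string[]) {
    const body: TokenRequestBody = { tid, token: clientSideToken, scopes };
    return {
        method: 'post',
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify(body)
    };
}

// Placeholder values, not real identifiers or tokens.
const exampleRequest = buildTokenRequest(
    'my-tenant-id',
    'client-token',
    ['https://graph.microsoft.com/User.Read']);
console.log(exampleRequest.body);
```

Keeping the body construction in a separate function like this makes it easy to check the payload without a running Teams client.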

Since we want to call this method from our Blazor components, we add a corresponding method to our JavaScript wrapper interface and class:

ITeamsClient

    using System.Threading.Tasks;

    namespace SpectoLogic.Blazor.MSTeams
    {
        public interface ITeamsClient
        {
            ValueTask<string> GetClientToken();
            ValueTask<string> GetServerToken(string clientToken, string[] scopes);
            ValueTask<string> GetUPN();
        }
    }

TeamsClient

    using Microsoft.JSInterop;
    using System.Threading.Tasks;

    namespace SpectoLogic.Blazor.MSTeams
    {
        public class TeamsClient : ITeamsClient
        {
            public TeamsClient(IJSRuntime jSRuntime)
            {
                _JSRuntime = jSRuntime;
            }

            private readonly IJSRuntime _JSRuntime;

            public ValueTask<string> GetUPN() =>
                _JSRuntime.InvokeAsync<string>(MethodNames.GET_UPN_METHOD);

            public ValueTask<string> GetClientToken() =>
                _JSRuntime.InvokeAsync<string>(MethodNames.GET_CLIENT_TOKEN_METHOD);

            public ValueTask<string> GetServerToken(string clientToken, string[] scopes) =>
                _JSRuntime.InvokeAsync<string>(
                    MethodNames.GET_SERVER_TOKEN_METHOD, clientToken, scopes);

            private class MethodNames
            {
                public const string GET_UPN_METHOD
                    = "BlazorExtensions.SpectoLogicMSTeams.GetUPN";
                public const string GET_CLIENT_TOKEN_METHOD
                    = "BlazorExtensions.SpectoLogicMSTeams.GetClientToken";
                public const string GET_SERVER_TOKEN_METHOD
                    = "BlazorExtensions.SpectoLogicMSTeams.GetServerToken";
            }
        }
    }

Extend our Blazor Library

Add the following NuGet packages to SpectoLogic.Blazor.MSTeams.csproj:

- Microsoft.Extensions.Http

- System.Text.Json

Create a new subfolder auth within the project and add the following classes:

TokenResponse.cs

This class mirrors the token response we get back from the OAuth endpoint.

    using System.Text.Json.Serialization;

    namespace SpectoLogic.Blazor.MSTeams.Auth
    {
        public class TokenResponse
        {
            [JsonPropertyName("token_type")]
            public string TokenType { get; set; }

            [JsonPropertyName("scope")]
            public string Scope { get; set; }

            [JsonPropertyName("expires_in")]
            public int ExpiresIn { get; set; }

            [JsonPropertyName("ext_expires_in")]
            public int ExtExpiresIn { get; set; }

            [JsonPropertyName("access_token")]
            public string AccessToken { get; set; }
        }
    }

TokenError.cs

This class mirrors an error that we might get back from the OAuth endpoint. For example, if consent has not been granted for our tenant and the user has not granted consent so far, we will receive an error like 'invalid_grant'.

    using System.Text.Json.Serialization;

    namespace SpectoLogic.Blazor.MSTeams.Auth
    {
        public class TokenError
        {
            /// <summary>
            /// May include things like "invalid_grant"
            /// </summary>
            [JsonPropertyName("error")]
            public string Error { get; set; }

            [JsonPropertyName("error_description")]
            public string Description { get; set; }

            [JsonPropertyName("error_codes")]
            public int[] Codes { get; set; }

            [JsonPropertyName("timestamp")]
            public string Timestamp { get; set; }

            [JsonPropertyName("trace_id")]
            public string TraceId { get; set; }

            [JsonPropertyName("correlation_id")]
            public string CorrelationId { get; set; }

            [JsonPropertyName("error_uri")]
            public string ErrorUri { get; set; }
        }
    }

ITokenProvider.cs

The interface for our server-side helper class that encapsulates the retrieval of the access token.

    using System.Threading.Tasks;

    namespace SpectoLogic.Blazor.MSTeams.Auth
    {
        public interface ITokenProvider
        {
            Task<TokenResponse> GetToken(
                string tenantId,
                string token,
                string clientId,
                string clientSecret,
                string[] scopes);
        }
    }

TokenErrorException.cs

An exception that will be thrown by the TokenProvider implementation, if we receive an error response from the OAuth Endpoint.

    using System;
    using System.Runtime.Serialization;

    namespace SpectoLogic.Blazor.MSTeams.Auth
    {
        public class TokenErrorException : Exception
        {
            public TokenError TokenError { get; set; }

            public TokenErrorException()
            {
            }

            public TokenErrorException(TokenError error) : base(error.Error)
            {
                TokenError = error;
            }

            public TokenErrorException(TokenError error, Exception innerException)
                : base(error.Error, innerException)
            {
                TokenError = error;
            }

            protected TokenErrorException(SerializationInfo info, StreamingContext context)
                : base(info, context)
            {
            }
        }
    }

TokenProvider.cs

The implementation of our token provider accessing the Token-Endpoint. Future implementations should use the MSAL-Library from Microsoft, but for illustration purposes this should suffice:

    using System.Collections.Generic;
    using System.Net.Http;
    using System.Text.Json;
    using System.Threading.Tasks;

    namespace SpectoLogic.Blazor.MSTeams.Auth
    {
        public class TokenProvider : ITokenProvider
        {
            private readonly IHttpClientFactory _clientFactory;

            public TokenProvider(IHttpClientFactory clientFactory)
            {
                _clientFactory = clientFactory;
            }

            public async Task<TokenResponse> GetToken(
                string tenantId,
                string token,
                string clientId,
                string clientSecret,
                string[] scopes)
            {
                string requestUrlString =
                    $"https://login.microsoftonline.com/{tenantId}/oauth2/v2.0/token";

                // Form fields of the OAuth 2.0 on-behalf-of request
                Dictionary<string, string> values = new Dictionary<string, string>
                {
                    { "client_id", clientId },
                    { "client_secret", clientSecret },
                    { "grant_type", "urn:ietf:params:oauth:grant-type:jwt-bearer" },
                    { "assertion", token },
                    { "requested_token_use", "on_behalf_of" },
                    { "scope", string.Join(" ", scopes) }
                };
                FormUrlEncodedContent content = new FormUrlEncodedContent(values);

                HttpClient client = _clientFactory.CreateClient();
                HttpResponseMessage resp = await client.PostAsync(
                    requestUrlString, content);

                if (resp.IsSuccessStatusCode)
                {
                    string respContent = await resp.Content.ReadAsStringAsync();
                    return JsonSerializer.Deserialize<TokenResponse>(respContent);
                }
                else
                {
                    string respContent = await resp.Content.ReadAsStringAsync();
                    TokenError tokenError =
                        JsonSerializer.Deserialize<TokenError>(respContent);
                    throw new TokenErrorException(tokenError);
                }
            }
        }
    }
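For readers who want to see what actually goes over the wire, the following is a rough TypeScript sketch of the form body and endpoint URL that the token provider above assembles. The helpers buildOboForm and tokenEndpoint are hypothetical names used only for illustration; the field names and values are taken from the C# code. Note that encodeURIComponent writes a space as %20, so the exact bytes may differ from what FormUrlEncodedContent produces.

```typescript
// Hypothetical helper mirroring the form fields used by TokenProvider.GetToken.
function buildOboForm(
    clientId: string,
    clientSecret: string,
    assertion: string,
    scopes: string[]
): string {
    const fields: [string, string][] = [
        ['client_id', clientId],
        ['client_secret', clientSecret],
        ['grant_type', 'urn:ietf:params:oauth:grant-type:jwt-bearer'],
        ['assertion', assertion],
        ['requested_token_use', 'on_behalf_of'],
        ['scope', scopes.join(' ')] // scopes are sent space-separated
    ];
    return fields
        .map(([key, value]) => `${encodeURIComponent(key)}=${encodeURIComponent(value)}`)
        .join('&');
}

// Hypothetical helper: the tenant-specific v2.0 token endpoint.
function tokenEndpoint(tenantId: string): string {
    return `https://login.microsoftonline.com/${tenantId}/oauth2/v2.0/token`;
}

// Placeholder values only; never ship a real client secret to the browser.
console.log(tokenEndpoint('my-tenant-id'));
console.log(buildOboForm('my-client-id', 'my-secret', 'client-token', ['User.Read']));
```

This is only meant to illustrate the request format. The exchange itself has to stay on the server, because it needs the client secret.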

TokenProviderExtensions

And finally, a class that implements the extension method which the server project can use to register these classes for DI.

    using Microsoft.Extensions.DependencyInjection;

    namespace SpectoLogic.Blazor.MSTeams.Auth
    {
        public static class TokenProviderExtensions
        {
            public static IServiceCollection AddTokenProvider(
                this IServiceCollection services)
                => services.AddScoped<ITokenProvider, TokenProvider>()
                           .AddHttpClient();
        }
    }

Use the library in our Blazor-Backend

Now let’s use this library in our Backend to implement the /auth/token service.

First, add a reference to our Razor library from the BlazorTeamTab.Server project.

Extend Startup.cs to call the extension method that will provide the necessary classes via DI:

    ...
    using SpectoLogic.Blazor.MSTeams.Auth; // Add this line
    ...
    services.AddRazorPages();
    services.AddTokenProvider(); // Add this line
    ...

Add a folder Dtos and add the class TokenRequest to it. It mirrors the structure we receive from our TypeScript implementation:

    using System.Text.Json.Serialization;

    namespace SpectoLogic.Blazor.MSTeams.Auth
    {
        public class TokenRequest
        {
            [JsonPropertyName("tid")]
            public string Tid { get; set; }

            [JsonPropertyName("token")]
            public string Token { get; set; }

            [JsonPropertyName("scopes")]
            public string[] Scopes { get; set; }
        }
    }

Under Server/Controllers create a new file AuthController.cs.

For this sample we hardcode the ClientID/ClientSecret of our AAD application. Do NOT do that in production code! Never store secrets in code! Use Azure KeyVault or another, more secure location.

    using Microsoft.AspNetCore.Mvc;
    using Microsoft.Extensions.Logging;
    using SpectoLogic.Blazor.MSTeams.Auth;
    using System;
    using System.Threading.Tasks;

    namespace BlazorTeamApp.Server.Controllers
    {
        [ApiController]
        [Route("[controller]/[action]")]
        public class AuthController : ControllerBase
        {
            private readonly ILogger<AuthController> logger;
            private readonly ITokenProvider tokenProvider;

            public AuthController(
                ILogger<AuthController> logger,
                ITokenProvider tokenProvider)
            {
                this.logger = logger;
                this.tokenProvider = tokenProvider;
            }

            [HttpPost]
            [ActionName("token")]
            public async Task<IActionResult> Token(
                [FromBody] TokenRequest tokenrequest)
            {
                string clientId = "e2088d72-79ce-4543-ab3c-0988dfecf2f1"; // From Step 4
                string clientSecret = "_2xb_7h-P1voxOddv4jzaE8I_l-Tx71BlH"; // From Step 4
                try
                {
                    TokenResponse tokenResponse = await tokenProvider.GetToken(
                        tokenrequest.Tid,
                        tokenrequest.Token,
                        clientId,
                        clientSecret,
                        tokenrequest.Scopes);
                    return new JsonResult(tokenResponse.AccessToken);
                }
                catch (TokenErrorException tokenErrorEx)
                {
                    return new JsonResult(tokenErrorEx.TokenError);
                }
                catch (Exception)
                {
                    throw;
                }
            }
        }
    }
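Note that this controller answers either with a plain JSON string (the access token) or with a serialized TokenError object. As a minimal sketch, a client could tell the two apart as shown below. The names TokenErrorPayload, isTokenError and unwrapToken are hypothetical and not part of the library; the property names error and error_description come from the TokenError class above.

```typescript
// Hypothetical client-side view of the TokenError JSON.
interface TokenErrorPayload {
    error: string;
    error_description?: string;
}

// Type guard: true if the payload looks like a TokenError object.
function isTokenError(value: unknown): value is TokenErrorPayload {
    return typeof value === 'object'
        && value !== null
        && typeof (value as { error?: unknown }).error === 'string';
}

// Returns the access token or throws with the error reported by the backend.
function unwrapToken(payload: unknown): string {
    if (isTokenError(payload)) {
        throw new Error(`${payload.error}: ${payload.error_description ?? 'no description'}`);
    }
    if (typeof payload !== 'string') {
        throw new Error('Unexpected response from /auth/token');
    }
    return payload;
}
```

An alternative design would be to return a non-2xx status code for the error case, so that the client can rely on response.ok alone.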

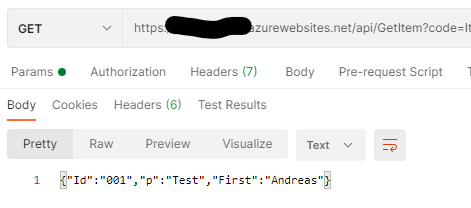

Access Microsoft Graph from Blazor-Page

Since we want to make HTTP requests, let's register the HTTP client in Program.cs of the client project:

    using SpectoLogic.Blazor.MSTeams;
    ...
    builder.Services.AddMSTeams();
    builder.Services.AddHttpClient(); // add this line!
    await builder.Build().RunAsync();
    ...

Now implement the Tab.razor page. We inject the IHttpClientFactory and add two new methods to handle our two new buttons.

One retrieves the server auth token for Microsoft Graph, while the other uses that token to fetch some basic user information from the Graph.

    @page "/tab"
    @inject SpectoLogic.Blazor.MSTeams.ITeamsClient TeamsClient;
    @inject IHttpClientFactory HttpFactory;

    <h1>Counter</h1>
    <p>Current count: @currentCount</p>
    <p>Current User: @userName</p>
    <p>AuthToken: @authToken</p>
    <p>Server Token: @authServerToken</p>
    <p>User Data: @userData</p>

    <button class="btn btn-primary" @onclick="IncrementCount">Click me</button>
    <button class="btn btn-primary" @onclick="GetUserName">Get UserName</button>
    <br />
    <button class="btn btn-light" @onclick="GetAuthToken">Get Auth-Token</button>
    <button class="btn btn-light" @onclick="GetServerAuthToken">Get Server-Token</button>
    <button class="btn btn-light" @onclick="GetUserData">Get User Data</button>

    @code {
        private int currentCount = 0;
        private string userName = string.Empty;

        private void IncrementCount()
        {
            currentCount++;
        }

        private async Task GetUserName()
        {
            userName = await TeamsClient.GetUPN();
        }

        private string authToken = string.Empty;

        private async Task GetAuthToken()
        {
            authToken = await TeamsClient.GetClientToken();
        }

        private string authServerToken = string.Empty;

        // Exchanges the client token for a Graph access token via /auth/token
        private async Task GetServerAuthToken()
        {
            try
            {
                authServerToken = await TeamsClient.GetServerToken(
                    authToken,
                    new[] { "https://graph.microsoft.com/User.Read" });
            }
            catch (Exception ex)
            {
                authServerToken = $"Error: {ex.Message}";
            }
        }

        private string userData = string.Empty;

        // Calls Microsoft Graph with the server token as bearer token
        private async Task GetUserData()
        {
            try
            {
                var client = HttpFactory.CreateClient();
                HttpRequestMessage request = new HttpRequestMessage()
                {
                    Method = HttpMethod.Get,
                    // https://graph.microsoft.com/v1.0/me/mailFolders('Inbox')/messages?$select=sender,subject&$top=2
                    RequestUri = new Uri("https://graph.microsoft.com/v1.0/me/")
                };
                request.Headers.Authorization =
                    new System.Net.Http.Headers.AuthenticationHeaderValue("Bearer", authServerToken);
                var response = await client.SendAsync(request);
                userData = await response.Content.ReadAsStringAsync();
            }
            catch (Exception ex)
            {
                userData = $"Error: {ex.Message}";
            }
        }
    }
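The Graph call itself is nothing more than an HTTP GET with the server token in the Authorization header. As a rough illustration in TypeScript, the request made by GetUserData could be described like this. The helper buildGraphMeRequest is a hypothetical name; the URL and the Bearer scheme are the ones used in the page above.

```typescript
// Hypothetical helper: describes the Graph call without sending it.
function buildGraphMeRequest(accessToken: string) {
    return {
        url: 'https://graph.microsoft.com/v1.0/me/',
        method: 'GET',
        headers: { Authorization: `Bearer ${accessToken}` }
    };
}

// Placeholder token; in the page this is the value of authServerToken.
const graphRequest = buildGraphMeRequest('server-token');
console.log(graphRequest.headers.Authorization);

// Sending it would look roughly like:
// fetch(graphRequest.url, { method: graphRequest.method, headers: graphRequest.headers })
```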

In our final post for this series tomorrow, we will secure the Weatherforecast API and reuse what we have learned so far to access the secured service.

That’s it for today! See you tomorrow!

AndiP