Welcome to the fifth post (0-4) of a series that shows you how to write a Microsoft Teams application with Blazor WebAssembly.

You will find the complete source code in this GitHub repository. I placed my changes in separate branches so you can easily jump in at any point.

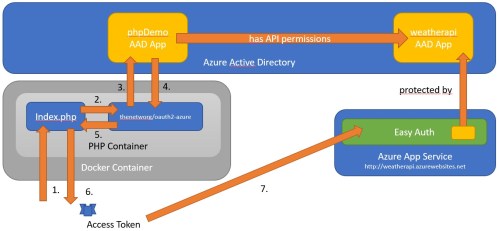

Fetching access tokens,…

To obtain access tokens, we first need to create an Azure Active Directory (AAD) application registration for our Blazor-Tab application.

Navigate to https://portal.azure.com and select your tenant. Then switch to Azure Active Directory and select App registrations in the left panel. You can also navigate there directly by using this link.

AAD App Registration

Click +New registration to register a new AAD application

- Name: BlazorApp

- Account Type: Accounts in any organizational directory (Multitenant)

- Redirect Uri: Leave that empty for now

and click Register.

Copy your Application (client) ID and store it for later. Throughout the samples I use the following client ID, which you need to replace with yours: a97275e3-965d-4076-90dd-76cce240e8fb

IMPORTANT

Microsoft Teams only allows AAD application scopes and redirect URLs that are in a permitted domain. Make sure that you use your ngrok or registered domain here!

Select Expose an API under the Manage section and set an Application ID URI. You can reuse the GUID from the proposed App ID URI, but put your domain in front of it. For example:

- api://demo.ngrok.io/a97275e3-965d-4076-90dd-76cce240e8fb

Now select +Add a scope to add the required access_as_user scope:

- Scope name: access_as_user

- Who can consent: Admins and users

- Provide a title and description for the information that is displayed when a tenant administrator or a user is asked to grant consent.

Finally, click Add scope. The resulting scope URI looks like this:

- api://demo.ngrok.io/a97275e3-965d-4076-90dd-76cce240e8fb/access_as_user
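The Application ID URI and the scope URI follow a fixed pattern: the scope URI is the Application ID URI with the scope name appended. The following JavaScript sketch composes both from your own domain and client ID. The helper names `buildAppIdUri` and `buildScope` are hypothetical and only illustrate the pattern used above.

```javascript
// Hypothetical helpers illustrating the naming pattern used in this post.
// Application ID URI for Teams SSO: api://<fully-qualified-domain>/<client-id>
function buildAppIdUri(domain, clientId) {
    if (domain.includes("/")) {
        // Pass only the host name, e.g. "demo.ngrok.io", not "https://demo.ngrok.io/"
        throw new Error("Expected a bare host name, got: " + domain);
    }
    return `api://${domain}/${clientId}`;
}

// Scope URI: the Application ID URI followed by the scope name.
function buildScope(appIdUri, scopeName = "access_as_user") {
    return `${appIdUri}/${scopeName}`;
}

const appIdUri = buildAppIdUri("demo.ngrok.io", "a97275e3-965d-4076-90dd-76cce240e8fb");
console.log(appIdUri);             // api://demo.ngrok.io/a97275e3-965d-4076-90dd-76cce240e8fb
console.log(buildScope(appIdUri)); // api://demo.ngrok.io/a97275e3-965d-4076-90dd-76cce240e8fb/access_as_user
```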

To let Teams request tokens for this API directly, without additional consent prompts, we pre-authorize the well-known client IDs of the Microsoft Teams applications. There are two of them: the Microsoft Teams web client and the Microsoft Teams desktop/mobile client.

Use the +Add a client application button to add the following client IDs. You also need to select the access_as_user scope so that the Teams apps can access this specific scope!

- Teams Web Application: 5e3ce6c0-2b1f-4285-8d4b-75ee78787346

- Teams Mobile/Desktop App: 1fec8e78-bce4-4aaf-ab1b-5451cc387264
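If you later want to check on a server which Teams client requested a token, these two IDs are what you would compare against. A minimal JavaScript sketch, assuming you already have the decoded token claims and assuming the requesting client appears in the `azp` claim (v2.0 tokens) or the `appid` claim (v1.0 tokens). `TEAMS_CLIENT_IDS` and `isTeamsClient` are hypothetical names, and this check does not replace proper token validation.

```javascript
// Well-known client IDs of the Microsoft Teams applications (from the list above).
const TEAMS_CLIENT_IDS = Object.freeze({
    web: "5e3ce6c0-2b1f-4285-8d4b-75ee78787346",
    desktopAndMobile: "1fec8e78-bce4-4aaf-ab1b-5451cc387264",
});

// Returns true if the decoded claims name one of the Teams clients as the requesting app.
// Assumption: v2.0 tokens carry the client ID in "azp", v1.0 tokens in "appid".
// This does NOT validate the token itself (signature, issuer, audience, expiry).
function isTeamsClient(claims) {
    const clientId = claims.azp ?? claims.appid;
    return Object.values(TEAMS_CLIENT_IDS).includes(clientId);
}

console.log(isTeamsClient({ appid: "5e3ce6c0-2b1f-4285-8d4b-75ee78787346" })); // true
console.log(isTeamsClient({ azp: "00000000-0000-0000-0000-000000000000" }));   // false
```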

Since we also want to use this AAD application to obtain further access tokens for Microsoft Graph and our own API, we create a client secret for requesting those tokens. Never store the client secret in a client application! It must always stay in a trusted environment.

Click Certificates & secrets under the Manage section and create a new client secret by selecting +New client secret.

Copy that client secret as well. I am using this one (it is invalid now, of course):

- B._12b.spZ391IGzr-Yg0T09Ug9D1erP.f
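The client secret is what lets a trusted backend exchange the Teams auth token for further access tokens. A sketch of such a token request, assuming the OAuth 2.0 on-behalf-of (OBO) flow of the Microsoft identity platform. `buildOboTokenRequest` is a hypothetical helper that only assembles the request; it belongs on a server, never in the Blazor client.

```javascript
// Hypothetical helper, assuming the OAuth 2.0 on-behalf-of flow (v2.0 token endpoint).
// Run this on a trusted server only: the request contains the client secret.
function buildOboTokenRequest({ tenantId, clientId, clientSecret, ssoToken, scopes }) {
    const url = `https://login.microsoftonline.com/${tenantId}/oauth2/v2.0/token`;
    const body = new URLSearchParams({
        grant_type: "urn:ietf:params:oauth:grant-type:jwt-bearer",
        client_id: clientId,
        client_secret: clientSecret,
        assertion: ssoToken,              // the auth token obtained from Teams
        scope: scopes.join(" "),          // e.g. "https://graph.microsoft.com/User.Read"
        requested_token_use: "on_behalf_of",
    });
    return { url, body };
}
```

A server could then send it with `fetch(url, { method: "POST", body })`; passing a `URLSearchParams` body makes `fetch` send it as `application/x-www-form-urlencoded`. The new token is returned in the `access_token` field of the JSON response.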

Click API permissions under the Manage section to add permissions for Microsoft Graph. We want to add delegated permissions (permissions in the context of the signed-in user). Add the following permissions if they are not already defined:

- User.Read

- offline_access

- openid

- profile

If you are interested in learning more about the offline_access permission, you can read this article.

After clicking Save, make sure to click the Grant admin consent for … button to grant these permissions within this tenant. That way, the users of this tenant do not have to consent to your MS Teams app individually.

Finally, select Authentication under the Manage section to add a redirect URL for access tokens. We will add the server part later!

- Click +Add a platform and select Web

- Add a redirect URL to your application: https://demo.ngrok.io/auth-end

- Scroll down to the Implicit grant section and make sure that tokens can be issued by the authorization endpoint. Select both options:

  - ID-Token (Checked)

  - Access-Token (Checked)
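With the redirect URL and the implicit grant configured, a sign-in page can send the user to the authorization endpoint and receive the ID and access tokens at /auth-end. A minimal sketch of that request URL, assuming the v2.0 authorize endpoint and the `common` tenant (matching the multitenant registration). `buildImplicitAuthorizeUrl` is hypothetical, and `nonce` and `state` must be fresh random values per request.

```javascript
// Hypothetical helper: builds an implicit-grant authorization request
// (assumption: Microsoft identity platform v2.0 authorize endpoint).
function buildImplicitAuthorizeUrl({ tenant = "common", clientId, redirectUri, scopes, nonce, state }) {
    const params = new URLSearchParams({
        client_id: clientId,
        response_type: "id_token token",  // both options enabled under "Implicit grant"
        redirect_uri: redirectUri,        // must match the registered redirect URL
        response_mode: "fragment",        // tokens come back in the URL fragment
        scope: scopes.join(" "),
        nonce,                            // required when requesting an id_token
        state,                            // echoed back; compare it on the auth-end page
    });
    return `https://login.microsoftonline.com/${tenant}/oauth2/v2.0/authorize?${params}`;
}
```

A sign-in page might navigate there with `window.location.assign(...)`, generating `nonce` and `state` with something like `crypto.randomUUID()`.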

Update your Teams Application Manifest and redeploy

Now that we have registered our AAD application, we configure our MS Teams app to use it.

Open your Visual Studio project and press CTRL+Q to open the general search. Type "teams" to get the option to view the Microsoft Teams Toolkit.

As before, click edit app-package and select development (some uid). Under Domains and permissions, set:

- AAD App ID: the previously created app ID: a97275e3-965d-4076-90dd-76cce240e8fb

- Single Sign On: the previously created Application ID URI: api://demo.ngrok.io/a97275e3-965d-4076-90dd-76cce240e8fb

Click Update to update the application package.

This will add this section to the manifest:

```json
  ...
  ],
  "validDomains": [],
  "webApplicationInfo": {
    "id": "a97275e3-965d-4076-90dd-76cce240e8fb",
    "resource": "api://demo.ngrok.io/a97275e3-965d-4076-90dd-76cce240e8fb"
  }
}
```

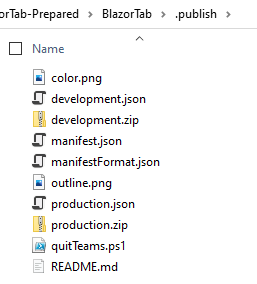

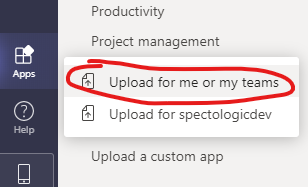

Make sure to use your own app ID and URIs. Then remove your app from Teams and redeploy it with the new development.zip file!
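A mismatch between the manifest and the AAD registration is easy to miss, so a quick check before zipping and redeploying can help. A hypothetical JavaScript helper, assuming you load manifest.json yourself (for example with `JSON.parse`); it only compares the values discussed above and is not part of the Teams Toolkit.

```javascript
// Hypothetical pre-deployment check for the webApplicationInfo section of a Teams manifest.
// Returns a list of problems; an empty list means the section looks consistent.
function checkWebApplicationInfo(manifest, expectedClientId) {
    const info = manifest.webApplicationInfo;
    if (!info) {
        return ["webApplicationInfo is missing"];
    }
    const problems = [];
    if (info.id !== expectedClientId) {
        problems.push(`id is "${info.id}", expected "${expectedClientId}"`);
    }
    // Expected shape: api://<domain>/<client-id>
    const match = /^api:\/\/([^/]+)\/(.+)$/.exec(info.resource ?? "");
    if (!match) {
        problems.push(`resource "${info.resource}" does not look like api://<domain>/<client-id>`);
    } else if (match[2] !== info.id) {
        problems.push(`resource ends with "${match[2]}", not with the id "${info.id}"`);
    }
    return problems;
}

const manifest = {
    validDomains: [],
    webApplicationInfo: {
        id: "a97275e3-965d-4076-90dd-76cce240e8fb",
        resource: "api://demo.ngrok.io/a97275e3-965d-4076-90dd-76cce240e8fb",
    },
};
console.log(checkWebApplicationInfo(manifest, "a97275e3-965d-4076-90dd-76cce240e8fb")); // []
```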

Getting the Auth-Token

Now let’s write some code to get the Auth-Token that we can later use to get access tokens for MS-Graph and our own API.

First, add this JavaScript code to the index.html page. Again, we use the MS Teams SDK to get the auth token.

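```javascript
// Requests the SSO (auth) token for the current user via the Microsoft Teams JavaScript SDK.
// Call this only after the Teams SDK has been initialized.
// Returns a Promise so that Blazor's JSRuntime.InvokeAsync can await the result.
function getClientSideToken() {
    // No try/catch needed: an exception thrown inside the executor rejects the Promise.
    return new Promise((resolve, reject) => {
        console.log("Get auth token from Microsoft Teams");

        microsoftTeams.authentication.getAuthToken({
            successCallback: (result) => {
                resolve(result);
            },
            failureCallback: (error) => {
                alert(error);
                reject(new Error("Error getting token: " + error));
            }
        });
    });
}
```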

From Blazor, we call this function as before, by adding a new button and a call to the JavaScript runtime:

```razor
...

<p>Current User: @userName</p>
<p>AuthToken: @authToken</p>

...

<button class="btn btn-primary" @onclick="IncrementCount">Click me</button>

...

<button class="btn btn-primary" @onclick="GetUserName">Get UserName</button>
<br />
<button class="btn btn-light" @onclick="GetAuthToken">Get Auth-Token</button>

@code {
    ...

    private async Task GetUserName()
    {
        userName = await JSRuntime.InvokeAsync<string>("GetUPN");
    }

    private string authToken = string.Empty;

    private async Task GetAuthToken()
    {
        // Calls getClientSideToken() from index.html and awaits the Promise it returns
        authToken = await JSRuntime.InvokeAsync<string>("getClientSideToken");
    }

    ...
}
```

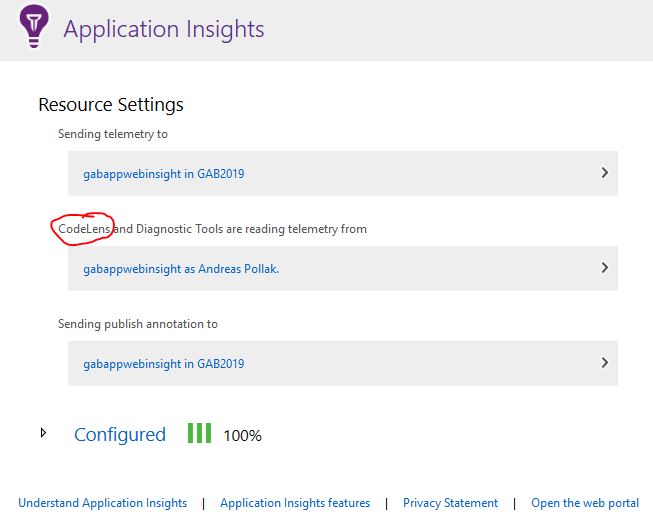

Rebuild your application and run it in the Visual Studio debugger. Make sure you have updated your Teams app, then start Teams and reload your personal tab. Initialize the Teams SDK and then try to get the auth token.

Copy the auth token and paste it into https://jwt.ms or https://jwt.io to examine it.
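Both sites essentially base64url-decode the token's segments. If you prefer to inspect the payload locally, a minimal JavaScript sketch (the name `decodeJwtPayload` is hypothetical) looks like this. It does not verify the signature, so use it for debugging only, never to decide whether a token can be trusted.

```javascript
// Decodes (but does NOT verify) the payload segment of a JWT.
function decodeJwtPayload(token) {
    const parts = token.split(".");
    if (parts.length !== 3) {
        throw new Error("Not a JWT: expected three dot-separated parts");
    }
    // base64url -> base64, then restore the stripped padding
    let b64 = parts[1].replace(/-/g, "+").replace(/_/g, "/");
    while (b64.length % 4 !== 0) {
        b64 += "=";
    }
    // atob yields a binary string; convert to bytes and decode as UTF-8 JSON
    const binary = atob(b64);
    const bytes = Uint8Array.from(binary, (c) => c.charCodeAt(0));
    return JSON.parse(new TextDecoder().decode(bytes));
}
```

For example, `const claims = decodeJwtPayload(authToken); console.log(claims.aud, claims.scp);`. For the Teams auth token you would expect `scp` to contain `access_as_user` and `aud` to refer to your AAD application.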

That’s it for today! See you tomorrow!

AndiP